mirror of

https://github.com/cyberarm/w3d_hub_linux_launcher.git

synced 2026-03-22 12:16:15 +00:00

Compare commits

5 Commits

752bd2b026...f2cd26dda3

| Author | SHA1 | Date |
|---|---|---|
| | f2cd26dda3 | |
| | 11e5b578a1 | |
| | 7f7e0fab6a | |
| | a0ff6ec812 | |
| | 840bc849d3 | |

3

Gemfile


@@ -1,7 +1,8 @@
 source "https://rubygems.org"
 
 gem "base64"
-gem "excon"
+gem "async-http"
+gem "async-websocket"
 gem "cyberarm_engine"
 gem "sdl2-bindings"
 gem "libui", platforms: [:windows]

59

Gemfile.lock


@@ -1,8 +1,35 @@

|

|||||||

GEM

|

GEM

|

||||||

remote: https://rubygems.org/

|

remote: https://rubygems.org/

|

||||||

specs:

|

specs:

|

||||||

|

async (2.35.1)

|

||||||

|

console (~> 1.29)

|

||||||

|

fiber-annotation

|

||||||

|

io-event (~> 1.11)

|

||||||

|

metrics (~> 0.12)

|

||||||

|

traces (~> 0.18)

|

||||||

|

async-http (0.92.1)

|

||||||

|

async (>= 2.10.2)

|

||||||

|

async-pool (~> 0.11)

|

||||||

|

io-endpoint (~> 0.14)

|

||||||

|

io-stream (~> 0.6)

|

||||||

|

metrics (~> 0.12)

|

||||||

|

protocol-http (~> 0.49)

|

||||||

|

protocol-http1 (~> 0.30)

|

||||||

|

protocol-http2 (~> 0.22)

|

||||||

|

protocol-url (~> 0.2)

|

||||||

|

traces (~> 0.10)

|

||||||

|

async-pool (0.11.1)

|

||||||

|

async (>= 2.0)

|

||||||

|

async-websocket (0.30.0)

|

||||||

|

async-http (~> 0.76)

|

||||||

|

protocol-http (~> 0.34)

|

||||||

|

protocol-rack (~> 0.7)

|

||||||

|

protocol-websocket (~> 0.17)

|

||||||

base64 (0.3.0)

|

base64 (0.3.0)

|

||||||

concurrent-ruby (1.3.5)

|

console (1.34.2)

|

||||||

|

fiber-annotation

|

||||||

|

fiber-local (~> 1.1)

|

||||||

|

json

|

||||||

cri (2.15.12)

|

cri (2.15.12)

|

||||||

cyberarm_engine (0.24.5)

|

cyberarm_engine (0.24.5)

|

||||||

gosu (~> 1.1)

|

gosu (~> 1.1)

|

||||||

@@ -14,17 +41,39 @@ GEM

|

|||||||

ffi (1.17.0)

|

ffi (1.17.0)

|

||||||

ffi-win32-extensions (1.1.0)

|

ffi-win32-extensions (1.1.0)

|

||||||

ffi (>= 1.15.5, <= 1.17.0)

|

ffi (>= 1.15.5, <= 1.17.0)

|

||||||

|

fiber-annotation (0.2.0)

|

||||||

|

fiber-local (1.1.0)

|

||||||

|

fiber-storage

|

||||||

|

fiber-storage (1.0.1)

|

||||||

fiddle (1.1.8)

|

fiddle (1.1.8)

|

||||||

gosu (1.4.6)

|

gosu (1.4.6)

|

||||||

i18n (1.14.7)

|

io-endpoint (0.16.0)

|

||||||

concurrent-ruby (~> 1.0)

|

io-event (1.14.2)

|

||||||

|

io-stream (0.11.1)

|

||||||

ircparser (1.0.0)

|

ircparser (1.0.0)

|

||||||

|

json (2.18.0)

|

||||||

libui (0.2.0-x64-mingw-ucrt)

|

libui (0.2.0-x64-mingw-ucrt)

|

||||||

fiddle

|

fiddle

|

||||||

logger (1.7.0)

|

logger (1.7.0)

|

||||||

|

metrics (0.15.0)

|

||||||

mutex_m (0.3.0)

|

mutex_m (0.3.0)

|

||||||

ocran (1.3.17)

|

ocran (1.3.17)

|

||||||

fiddle (~> 1.0)

|

fiddle (~> 1.0)

|

||||||

|

protocol-hpack (1.5.1)

|

||||||

|

protocol-http (0.57.0)

|

||||||

|

protocol-http1 (0.35.2)

|

||||||

|

protocol-http (~> 0.22)

|

||||||

|

protocol-http2 (0.23.0)

|

||||||

|

protocol-hpack (~> 1.4)

|

||||||

|

protocol-http (~> 0.47)

|

||||||

|

protocol-rack (0.20.0)

|

||||||

|

io-stream (>= 0.10)

|

||||||

|

protocol-http (~> 0.43)

|

||||||

|

rack (>= 1.0)

|

||||||

|

protocol-url (0.4.0)

|

||||||

|

protocol-websocket (0.20.2)

|

||||||

|

protocol-http (~> 0.2)

|

||||||

|

rack (3.2.4)

|

||||||

rake (13.3.1)

|

rake (13.3.1)

|

||||||

releasy (0.2.4)

|

releasy (0.2.4)

|

||||||

bundler (>= 1.2.1)

|

bundler (>= 1.2.1)

|

||||||

@@ -35,6 +84,7 @@ GEM

|

|||||||

rubyzip (3.2.2)

|

rubyzip (3.2.2)

|

||||||

sdl2-bindings (0.2.3)

|

sdl2-bindings (0.2.3)

|

||||||

ffi (~> 1.15)

|

ffi (~> 1.15)

|

||||||

|

traces (0.18.2)

|

||||||

websocket (1.2.11)

|

websocket (1.2.11)

|

||||||

websocket-client-simple (0.9.0)

|

websocket-client-simple (0.9.0)

|

||||||

base64

|

base64

|

||||||

@@ -51,12 +101,13 @@ PLATFORMS

|

|||||||

x64-mingw-ucrt

|

x64-mingw-ucrt

|

||||||

|

|

||||||

DEPENDENCIES

|

DEPENDENCIES

|

||||||

|

async-http

|

||||||

|

async-websocket

|

||||||

base64

|

base64

|

||||||

bundler (~> 2.4.3)

|

bundler (~> 2.4.3)

|

||||||

cyberarm_engine

|

cyberarm_engine

|

||||||

digest-crc

|

digest-crc

|

||||||

excon

|

excon

|

||||||

i18n

|

|

||||||

ircparser

|

ircparser

|

||||||

libui

|

libui

|

||||||

ocran

|

ocran

|

||||||

|

|||||||

15

README.md


@@ -1,14 +1,21 @@

|

|||||||

|

|

||||||

|

|

||||||

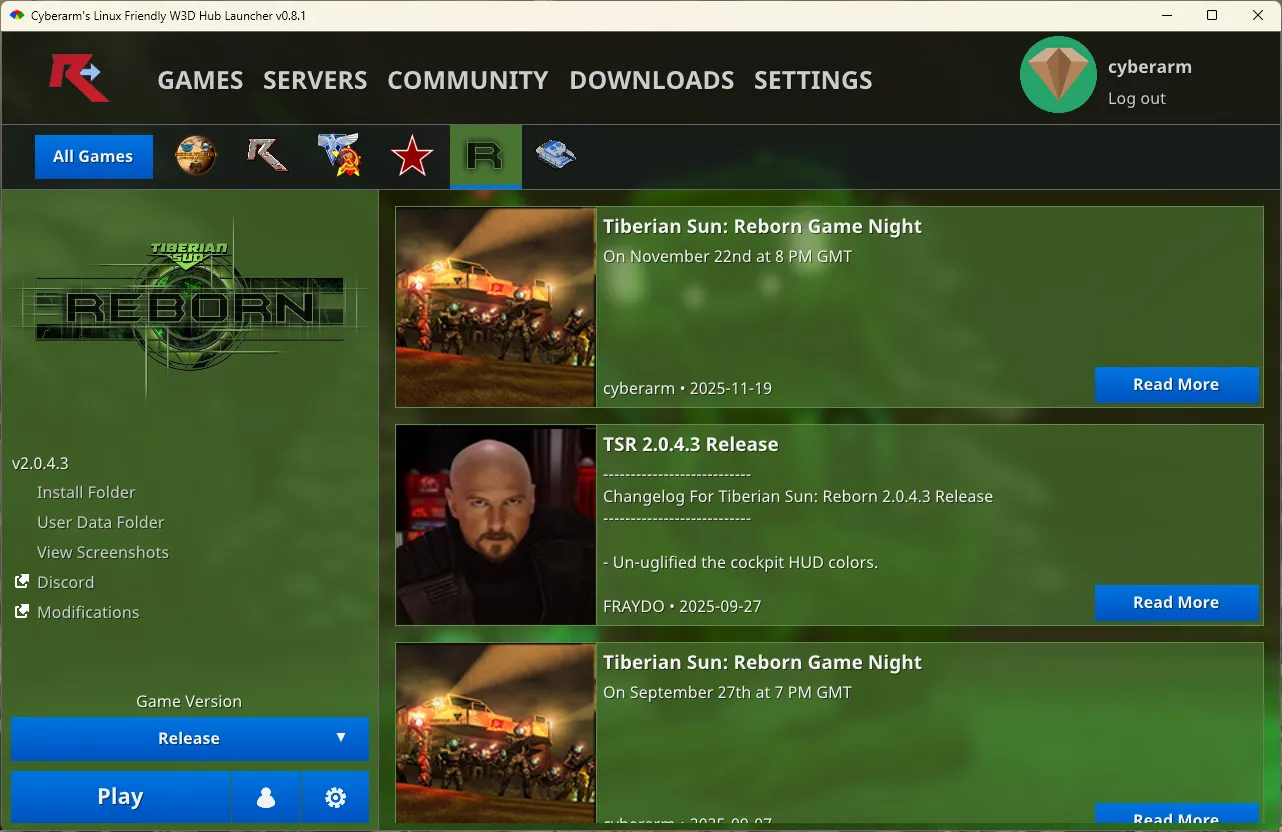

# Cyberarm's Linux Friendly W3D Hub Launcher

|

# Cyberarm's Linux Friendly W3D Hub Launcher

|

||||||

It runs natively on Linux! No mucking about trying to get .NET 4.6.1 or something installed in wine.

|

It runs natively on Linux! No mucking about trying to get .NET 4.6.1 or something installed in wine.

|

||||||

Only requires OpenGL, Ruby, and a few gems.

|

Only requires OpenGL, Ruby, and a few gems.

|

||||||

|

|

||||||

## Installing

|

## Download

|

||||||

|

[Download pre-built binaries.](https://github.com/cyberarm/w3d_hub_linux_launcher/releases)

|

||||||

|

|

||||||

|

## Development

|

||||||

|

|

||||||

|

### Installing

|

||||||

* Install Ruby 3.4+, from your package manager.

|

* Install Ruby 3.4+, from your package manager.

|

||||||

* Install Gosu's [dependencies](https://github.com/gosu/gosu/wiki/Getting-Started-on-Linux).

|

* Install Gosu's [dependencies](https://github.com/gosu/gosu/wiki/Getting-Started-on-Linux).

|

||||||

* Install required gems: `bundle install`

|

* Install required gems: `bundle install`

|

||||||

|

|

||||||

## Usage

|

### Usage

|

||||||

`ruby w3d_hub_linux_launcher.rb`

|

`ruby w3d_hub_linux_launcher.rb`

|

||||||

|

|

||||||

## Contributing

|

### Contributing

|

||||||

Contributors welcome, especially if anyone can lend a hand at reducing patching memory usage.

|

Contributors welcome.

|

||||||

|

|||||||

128

lib/api.rb


@@ -10,35 +10,37 @@ class W3DHub

|

|||||||

|

|

||||||

API_TIMEOUT = 30 # seconds

|

API_TIMEOUT = 30 # seconds

|

||||||

USER_AGENT = "Cyberarm's Linux Friendly W3D Hub Launcher v#{W3DHub::VERSION}".freeze

|

USER_AGENT = "Cyberarm's Linux Friendly W3D Hub Launcher v#{W3DHub::VERSION}".freeze

|

||||||

DEFAULT_HEADERS = {

|

DEFAULT_HEADERS = [

|

||||||

"User-Agent": USER_AGENT,

|

["user-agent", USER_AGENT],

|

||||||

"Accept": "application/json"

|

["accept", "application/json"]

|

||||||

}.freeze

|

].freeze

|

||||||

FORM_ENCODED_HEADERS = {

|

FORM_ENCODED_HEADERS = [

|

||||||

"User-Agent": USER_AGENT,

|

["user-agent", USER_AGENT],

|

||||||

"Accept": "application/json",

|

["accept", "application/json"],

|

||||||

"Content-Type": "application/x-www-form-urlencoded"

|

["content-type", "application/x-www-form-urlencoded"]

|

||||||

}.freeze

|

].freeze

|

||||||

|

|

||||||

def self.on_thread(method, *args, &callback)

|

def self.on_thread(method, *args, &callback)

|

||||||

BackgroundWorker.foreground_job(-> { Api.send(method, *args) }, callback)

|

BackgroundWorker.foreground_job(-> { Api.send(method, *args) }, callback)

|

||||||

end

|

end

|

||||||

|

|

||||||

class DummyResponse

|

class Response

|

||||||

def initialize(error)

|

def initialize(error: nil, status: -1, body: "")

|

||||||

|

@status = status

|

||||||

|

@body = body

|

||||||

@error = error

|

@error = error

|

||||||

end

|

end

|

||||||

|

|

||||||

def success?

|

def success?

|

||||||

false

|

@status == 200

|

||||||

end

|

end

|

||||||

|

|

||||||

def status

|

def status

|

||||||

-1

|

@status

|

||||||

end

|

end

|

||||||

|

|

||||||

def body

|

def body

|

||||||

""

|

@body

|

||||||

end

|

end

|

||||||

|

|

||||||

def error

|

def error

|

||||||
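The hunk above replaces the symbol-keyed header hashes with frozen arrays of lowercase `[name, value]` pairs, and a later hunk appends an authorization pair to a `dup` of those defaults. A minimal stdlib-only sketch of why the `dup` is needed; `with_authorization`, the placeholder user agent, and the token value are hypothetical and used only for illustration:

```ruby
# Frozen default headers as [name, value] pairs, mirroring the new constants.
DEFAULT_HEADERS = [
  ["user-agent", "example-launcher/0.0"], # placeholder, not the real USER_AGENT
  ["accept", "application/json"]
].freeze

# Hypothetical helper: returns a new header list with an authorization pair
# appended, leaving the frozen defaults untouched.
def with_authorization(headers, token)
  copy = headers.dup # dup of a frozen Array is not itself frozen
  copy << ["authorization", "Bearer #{token}"]
  copy
end

authed = with_authorization(DEFAULT_HEADERS, "abc123")
puts authed.length            # one more pair than the defaults
puts DEFAULT_HEADERS.frozen?  # the shared constant stays frozen
```

Appending directly to the frozen constant would raise `FrozenError`, so copying first keeps per-request headers from leaking into other requests.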

@@ -48,103 +50,60 @@ class W3DHub

|

|||||||

|

|

||||||

#! === W3D Hub API === !#

|

#! === W3D Hub API === !#

|

||||||

W3DHUB_API_ENDPOINT = "https://secure.w3dhub.com".freeze # "https://example.com" # "http://127.0.0.1:9292".freeze #

|

W3DHUB_API_ENDPOINT = "https://secure.w3dhub.com".freeze # "https://example.com" # "http://127.0.0.1:9292".freeze #

|

||||||

W3DHUB_API_CONNECTION = Excon.new(W3DHUB_API_ENDPOINT, persistent: true)

|

|

||||||

|

|

||||||

ALT_W3DHUB_API_ENDPOINT = "https://w3dhub-api.w3d.cyberarm.dev".freeze # "https://secure.w3dhub.com".freeze # "https://example.com" # "http://127.0.0.1:9292".freeze #

|

ALT_W3DHUB_API_ENDPOINT = "https://w3dhub-api.w3d.cyberarm.dev".freeze # "https://secure.w3dhub.com".freeze # "https://example.com" # "http://127.0.0.1:9292".freeze #

|

||||||

ALT_W3DHUB_API_API_CONNECTION = Excon.new(ALT_W3DHUB_API_ENDPOINT, persistent: true)

|

|

||||||

|

|

||||||

def self.excon(method, url, headers = DEFAULT_HEADERS, body = nil, backend = :w3dhub)

|

def self.async_http(method, url, headers = DEFAULT_HEADERS, body = nil, backend = :w3dhub)

|

||||||

case backend

|

case backend

|

||||||

when :w3dhub

|

when :w3dhub

|

||||||

connection = W3DHUB_API_CONNECTION

|

|

||||||

endpoint = W3DHUB_API_ENDPOINT

|

endpoint = W3DHUB_API_ENDPOINT

|

||||||

when :alt_w3dhub

|

when :alt_w3dhub

|

||||||

connection = ALT_W3DHUB_API_API_CONNECTION

|

|

||||||

endpoint = ALT_W3DHUB_API_ENDPOINT

|

endpoint = ALT_W3DHUB_API_ENDPOINT

|

||||||

when :gsh

|

when :gsh

|

||||||

connection = GSH_CONNECTION

|

|

||||||

endpoint = SERVER_LIST_ENDPOINT

|

endpoint = SERVER_LIST_ENDPOINT

|

||||||

end

|

end

|

||||||

|

|

||||||

logger.debug(LOG_TAG) { "Fetching #{method.to_s.upcase} \"#{endpoint}#{url}\"..." }

|

url = "#{endpoint}#{url}" unless url.start_with?("http")

|

||||||

|

|

||||||

|

logger.debug(LOG_TAG) { "Fetching #{method.to_s.upcase} \"#{url}\"..." }

|

||||||

|

|

||||||

# Inject Authorization header if account data is populated

|

# Inject Authorization header if account data is populated

|

||||||

if Store.account

|

if Store.account

|

||||||

logger.debug(LOG_TAG) { " Injecting Authorization header..." }

|

logger.debug(LOG_TAG) { " Injecting Authorization header..." }

|

||||||

headers = headers.dup

|

headers = headers.dup

|

||||||

headers["Authorization"] = "Bearer #{Store.account.access_token}"

|

headers << ["authorization", "Bearer #{Store.account.access_token}"]

|

||||||

end

|

end

|

||||||

|

|

||||||

begin

|

Sync do

|

||||||

connection.send(

|

begin

|

||||||

method,

|

response = Async::HTTP::Internet.send(method, url, headers, body)

|

||||||

path: url.sub(endpoint, ""),

|

|

||||||

headers: headers,

|

|

||||||

body: body,

|

|

||||||

nonblock: true,

|

|

||||||

tcp_nodelay: true,

|

|

||||||

write_timeout: API_TIMEOUT,

|

|

||||||

read_timeout: API_TIMEOUT,

|

|

||||||

connect_timeout: API_TIMEOUT,

|

|

||||||

idempotent: true,

|

|

||||||

retry_limit: 3,

|

|

||||||

retry_interval: 1,

|

|

||||||

retry_errors: [Excon::Error::Socket, Excon::Error::HTTPStatus] # Don't retry on timeout

|

|

||||||

)

|

|

||||||

rescue Excon::Error::Timeout => e

|

|

||||||

logger.error(LOG_TAG) { "Connection to \"#{url}\" timed out after: #{API_TIMEOUT} seconds" }

|

|

||||||

|

|

||||||

DummyResponse.new(e)

|

Response.new(status: response.status, body: response.read)

|

||||||

rescue Excon::Error => e

|

rescue Async::TimeoutError => e

|

||||||

logger.error(LOG_TAG) { "Connection to \"#{url}\" errored:" }

|

logger.error(LOG_TAG) { "Connection to \"#{url}\" timed out after: #{API_TIMEOUT} seconds" }

|

||||||

logger.error(LOG_TAG) { e }

|

|

||||||

|

|

||||||

DummyResponse.new(e)

|

Response.new(error: e)

|

||||||

|

rescue StandardError => e

|

||||||

|

logger.error(LOG_TAG) { "Connection to \"#{url}\" errored:" }

|

||||||

|

logger.error(LOG_TAG) { e }

|

||||||

|

|

||||||

|

Response.new(error: e)

|

||||||

|

ensure

|

||||||

|

response&.close

|

||||||

|

end

|

||||||

end

|

end

|

||||||

end

|

end

|

||||||

|

|

||||||

def self.post(url, headers = DEFAULT_HEADERS, body = nil, backend = :w3dhub)

|

def self.post(url, headers = DEFAULT_HEADERS, body = nil, backend = :w3dhub)

|

||||||

excon(:post, url, headers, body, backend)

|

async_http(:post, url, headers, body, backend)

|

||||||

end

|

end

|

||||||

|

|

||||||

def self.get(url, headers = DEFAULT_HEADERS, body = nil, backend = :w3dhub)

|

def self.get(url, headers = DEFAULT_HEADERS, body = nil, backend = :w3dhub)

|

||||||

excon(:get, url, headers, body, backend)

|

async_http(:get, url, headers, body, backend)

|

||||||

end

|

end

|

||||||

|

|

||||||

# Api.get but handles any URL instead of known hosts

|

# Api.get but handles any URL instead of known hosts

|

||||||

def self.fetch(url, headers = DEFAULT_HEADERS, body = nil, backend = nil)

|

def self.fetch(url, headers = DEFAULT_HEADERS, body = nil, backend = nil)

|

||||||

uri = URI(url)

|

async_http(:get, url, headers, body, backend)

|

||||||

|

|

||||||

# Use Api.get for `W3DHUB_API_ENDPOINT` URL's to exploit keep alive and connection reuse (faster responses)

|

|

||||||

return excon(:get, url, headers, body, backend) if "#{uri.scheme}://#{uri.host}" == W3DHUB_API_ENDPOINT

|

|

||||||

|

|

||||||

logger.debug(LOG_TAG) { "Fetching GET \"#{url}\"..." }

|

|

||||||

|

|

||||||

begin

|

|

||||||

Excon.get(

|

|

||||||

url,

|

|

||||||

headers: headers,

|

|

||||||

body: body,

|

|

||||||

nonblock: true,

|

|

||||||

tcp_nodelay: true,

|

|

||||||

write_timeout: API_TIMEOUT,

|

|

||||||

read_timeout: API_TIMEOUT,

|

|

||||||

connect_timeout: API_TIMEOUT,

|

|

||||||

idempotent: true,

|

|

||||||

retry_limit: 3,

|

|

||||||

retry_interval: 1,

|

|

||||||

retry_errors: [Excon::Error::Socket, Excon::Error::HTTPStatus] # Don't retry on timeout

|

|

||||||

)

|

|

||||||

rescue Excon::Error::Timeout => e

|

|

||||||

logger.error(LOG_TAG) { "Connection to \"#{url}\" timed out after: #{API_TIMEOUT} seconds" }

|

|

||||||

|

|

||||||

DummyResponse.new(e)

|

|

||||||

rescue Excon::Error => e

|

|

||||||

logger.error(LOG_TAG) { "Connection to \"#{url}\" errored:" }

|

|

||||||

logger.error(LOG_TAG) { e }

|

|

||||||

|

|

||||||

DummyResponse.new(e)

|

|

||||||

end

|

|

||||||

end

|

end

|

||||||

|

|

||||||

# Method: POST

|

# Method: POST

|

||||||
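The rewritten `async_http` returns a `Response` on every path: a real status and body when the request completes, or the default `status: -1` together with the rescued error otherwise. A minimal sketch of that pattern with no network access; `SketchResponse` and `wrap_request` are hypothetical names, and the block stands in for the actual HTTP call:

```ruby
# Small result object modelled on the Response class in the diff.
class SketchResponse
  attr_reader :status, :body, :error

  def initialize(error: nil, status: -1, body: "")
    @status = status
    @body = body
    @error = error
  end

  def success?
    @status == 200
  end
end

# Hypothetical wrapper: the block must return [status, body]; any StandardError
# is captured into the response instead of propagating to the caller.
def wrap_request
  status, body = yield
  SketchResponse.new(status: status, body: body)
rescue StandardError => e
  SketchResponse.new(error: e)
end

ok = wrap_request { [200, "{}"] }
failed = wrap_request { raise IOError, "connection reset" }
puts ok.success?   # true
puts failed.status # -1
```

Callers can then branch on `status` or `success?` without their own `rescue`, which is how the login and news methods in this file already consume the result.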

@@ -163,7 +122,7 @@ class W3DHub
 # On a failed login the service responds with:
 # {"error":"login-failed"}
 def self.refresh_user_login(refresh_token, backend = :w3dhub)
-body = "data=#{JSON.dump({refreshToken: refresh_token})}"
+body = URI.encode_www_form("data": JSON.dump({refreshToken: refresh_token}))
 response = post("/apis/launcher/1/user-login", FORM_ENCODED_HEADERS, body, backend)
 
 if response.status == 200

@@ -183,7 +142,7 @@ class W3DHub
 
 # See #user_refresh_token
 def self.user_login(username, password, backend = :w3dhub)
-body = "data=#{JSON.dump({username: username, password: password})}"
+body = URI.encode_www_form("data": JSON.dump({username: username, password: password}))
 response = post("/apis/launcher/1/user-login", FORM_ENCODED_HEADERS, body, backend)
 
 if response.status == 200

@@ -205,7 +164,7 @@ class W3DHub
 #
 # Response: avatar-uri (Image download uri), id, username
 def self.user_details(id, backend = :w3dhub)
-body = "data=#{JSON.dump({ id: id })}"
+body = URI.encode_www_form("data": JSON.dump({ id: id }))
 user_details = post("/apis/w3dhub/1/get-user-details", FORM_ENCODED_HEADERS, body, backend)
 
 if user_details.status == 200

@@ -322,7 +281,7 @@ class W3DHub
 # Client requests news for a specific application/game e.g.: data={"category":"ia"} ("launcher-home" retrieves the weekly hub updates)
 # Response is a JSON hash with a "highlighted" and "news" keys; the "news" one seems to be the desired one
 def self.news(category, backend = :w3dhub)
-body = "data=#{JSON.dump({category: category})}"
+body = URI.encode_www_form("data": JSON.dump({category: category}))
 response = post("/apis/w3dhub/1/get-news", FORM_ENCODED_HEADERS, body, backend)
 
 if response.status == 200

@@ -383,7 +342,6 @@ class W3DHub
 # SERVER_LIST_ENDPOINT = "https://gsh.w3dhub.com".freeze
 SERVER_LIST_ENDPOINT = "https://gsh.w3d.cyberarm.dev".freeze
 # SERVER_LIST_ENDPOINT = "http://127.0.0.1:9292".freeze
-GSH_CONNECTION = Excon.new(SERVER_LIST_ENDPOINT, persistent: true)
 
 # Method: GET
 # FORMAT: JSON

|||||||
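Several hunks in `lib/api.rb` switch request bodies from raw `"data=#{JSON.dump(...)}"` interpolation to `URI.encode_www_form`. A short stdlib-only sketch of the difference; the helper names and the sample credentials are made up for illustration:

```ruby
require "json"
require "uri"

# Old style: raw interpolation leaves "&", "=" and spaces unescaped.
def raw_form_body(payload)
  "data=#{payload}"
end

# New style: the value is percent-encoded as application/x-www-form-urlencoded.
def encoded_form_body(payload)
  URI.encode_www_form("data": payload)
end

payload = JSON.dump({ username: "user&name", password: "p=ss word" })

# Decoding the encoded body recovers the original JSON exactly.
decoded = URI.decode_www_form(encoded_form_body(payload)).to_h
puts decoded["data"] == payload

# Decoding the raw body yields more than one pair because "&" split the value.
puts URI.decode_www_form(raw_form_body(payload)).length
```

Any value containing `&`, `=`, `+` or `%` can be misparsed by a form decoder when sent raw, which is presumably why the bodies are now encoded.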

@@ -23,20 +23,24 @@ class W3DHub

|

|||||||

end

|

end

|

||||||

|

|

||||||

def run

|

def run

|

||||||

|

return

|

||||||

|

|

||||||

Thread.new do

|

Thread.new do

|

||||||

begin

|

Sync do |task|

|

||||||

connect

|

begin

|

||||||

|

async_connect(task)

|

||||||

|

|

||||||

while W3DHub::BackgroundWorker.alive?

|

while W3DHub::BackgroundWorker.alive?

|

||||||

connect if @auto_reconnect

|

async_connect(task) if @auto_reconnect

|

||||||

sleep 1

|

sleep 1

|

||||||

|

end

|

||||||

|

rescue => e

|

||||||

|

puts e

|

||||||

|

puts e.backtrace

|

||||||

|

|

||||||

|

sleep 30

|

||||||

|

retry

|

||||||

end

|

end

|

||||||

rescue => e

|

|

||||||

puts e

|

|

||||||

puts e.backtrace

|

|

||||||

|

|

||||||

sleep 30

|

|

||||||

retry

|

|

||||||

end

|

end

|

||||||

end

|

end

|

||||||

|

|

||||||

@@ -44,6 +48,39 @@ class W3DHub

|

|||||||

@@instance = nil

|

@@instance = nil

|

||||||

end

|

end

|

||||||

|

|

||||||

|

def async_connect(task)

|

||||||

|

@auto_reconnect = false

|

||||||

|

|

||||||

|

logger.debug(LOG_TAG) { "Requesting connection token..." }

|

||||||

|

response = Api.post("/listings/push/v2/negotiate?negotiateVersion=1", Api::DEFAULT_HEADERS, "", :gsh)

|

||||||

|

|

||||||

|

if response.status != 200

|

||||||

|

@auto_reconnect = true

|

||||||

|

return

|

||||||

|

end

|

||||||

|

|

||||||

|

data = JSON.parse(response.body, symbolize_names: true)

|

||||||

|

|

||||||

|

@invocation_id = 0 if @invocation_id > 9095

|

||||||

|

id = data[:connectionToken]

|

||||||

|

endpoint = "#{Api::SERVER_LIST_ENDPOINT}/listings/push/v2?id=#{id}"

|

||||||

|

|

||||||

|

logger.debug(LOG_TAG) { "Connecting to websocket..." }

|

||||||

|

|

||||||

|

Async::WebSocket::Client.connect(Async::HTTP::Endpoint.parse(endpoint)) do |connection|

|

||||||

|

logger.debug(LOG_TAG) { "Requesting json protocol, v1..." }

|

||||||

|

async_websocket_send(connection, { protocol: "json", version: 1 }.to_json)

|

||||||

|

end

|

||||||

|

end

|

||||||

|

|

||||||

|

def async_websocket_send(connection, payload)

|

||||||

|

connection.write("#{payload}\x1e")

|

||||||

|

connection.flush

|

||||||

|

end

|

||||||

|

|

||||||

|

def async_websocket_read(connection, payload)

|

||||||

|

end

|

||||||

|

|

||||||

def connect

|

def connect

|

||||||

@auto_reconnect = false

|

@auto_reconnect = false

|

||||||

|

|

||||||

|

|||||||
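`async_websocket_send` terminates every payload with `\x1e`, and the negotiate request plus the `{ protocol: "json", version: 1 }` handshake suggest a SignalR-style JSON protocol in which `0x1E` acts as a record separator. That reading is an assumption, as is everything in the sketch below: `frame_message` and `split_frames` are hypothetical helpers showing how the still-empty `async_websocket_read` stub might split a buffer, not the project's implementation.

```ruby
require "json"

RECORD_SEPARATOR = "\x1e"

# Frame one message: JSON text followed by the record separator.
def frame_message(hash)
  "#{JSON.generate(hash)}#{RECORD_SEPARATOR}"
end

# Split a received buffer into complete messages plus any trailing partial data.
def split_frames(buffer)
  parts = buffer.split(RECORD_SEPARATOR, -1)
  remainder = parts.pop || "" # "" when the buffer ended exactly on a separator
  [parts.map { |part| JSON.parse(part, symbolize_names: true) }, remainder]
end

handshake = frame_message({ protocol: "json", version: 1 })
messages, rest = split_frames(handshake + "{\"type\":6}" + RECORD_SEPARATOR + "{\"ty")
p messages # two complete messages
p rest     # the incomplete tail, kept for the next read
```

Keeping the remainder lets a reader prepend it to the next chunk, since a single websocket read is not guaranteed to end on a message boundary.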

@@ -739,34 +739,51 @@ class W3DHub

|

|||||||

temp_file_path = normalize_path(manifest_file.name, temp_path)

|

temp_file_path = normalize_path(manifest_file.name, temp_path)

|

||||||

|

|

||||||

logger.info(LOG_TAG) { " Loading #{temp_file_path}.patch..." }

|

logger.info(LOG_TAG) { " Loading #{temp_file_path}.patch..." }

|

||||||

patch_mix = W3DHub::Mixer::Reader.new(file_path: "#{temp_file_path}.patch", ignore_crc_mismatches: false)

|

patch_mix = W3DHub::WWMix.new(path: "#{temp_file_path}.patch")

|

||||||

patch_info = JSON.parse(patch_mix.package.files.find { |f| f.name.casecmp?(".w3dhub.patch") || f.name.casecmp?(".bhppatch") }.data, symbolize_names: true)

|

unless patch_mix.load

|

||||||

|

raise patch_mix.error_reason

|

||||||

|

end

|

||||||

|

patch_entry = patch_mix.entries.find { |e| e.name.casecmp?(".w3dhub.patch") || e.name.casecmp?(".bhppatch") }

|

||||||

|

patch_entry.read

|

||||||

|

|

||||||

|

patch_info = JSON.parse(patch_entry.blob, symbolize_names: true)

|

||||||

|

|

||||||

logger.info(LOG_TAG) { " Loading #{file_path}..." }

|

logger.info(LOG_TAG) { " Loading #{file_path}..." }

|

||||||

target_mix = W3DHub::Mixer::Reader.new(file_path: "#{file_path}", ignore_crc_mismatches: false)

|

target_mix = W3DHub::WWMix.new(path: "#{file_path}")

|

||||||

|

unless target_mix.load

|

||||||

|

raise target_mix.error_reason

|

||||||

|

end

|

||||||

|

|

||||||

logger.info(LOG_TAG) { " Removing files..." } if patch_info[:removedFiles].size.positive?

|

logger.info(LOG_TAG) { " Removing files..." } if patch_info[:removedFiles].size.positive?

|

||||||

patch_info[:removedFiles].each do |file|

|

patch_info[:removedFiles].each do |file|

|

||||||

logger.debug(LOG_TAG) { " #{file}" }

|

logger.debug(LOG_TAG) { " #{file}" }

|

||||||

target_mix.package.files.delete_if { |f| f.name.casecmp?(file) }

|

target_mix.entries.delete_if { |e| e.name.casecmp?(file) }

|

||||||

end

|

end

|

||||||

|

|

||||||

logger.info(LOG_TAG) { " Adding/Updating files..." } if patch_info[:updatedFiles].size.positive?

|

logger.info(LOG_TAG) { " Adding/Updating files..." } if patch_info[:updatedFiles].size.positive?

|

||||||

patch_info[:updatedFiles].each do |file|

|

patch_info[:updatedFiles].each do |file|

|

||||||

logger.debug(LOG_TAG) { " #{file}" }

|

logger.debug(LOG_TAG) { " #{file}" }

|

||||||

|

|

||||||

patch = patch_mix.package.files.find { |f| f.name.casecmp?(file) }

|

patch = patch_mix.entries.find { |e| e.name.casecmp?(file) }

|

||||||

target = target_mix.package.files.find { |f| f.name.casecmp?(file) }

|

target = target_mix.entries.find { |e| e.name.casecmp?(file) }

|

||||||

|

|

||||||

if target

|

if target

|

||||||

target_mix.package.files[target_mix.package.files.index(target)] = patch

|

target_mix.entries[target_mix.entries.index(target)] = patch

|

||||||

else

|

else

|

||||||

target_mix.package.files << patch

|

target_mix.entries << patch

|

||||||

end

|

end

|

||||||

end

|

end

|

||||||

|

|

||||||

logger.info(LOG_TAG) { " Writing updated #{file_path}..." } if patch_info[:updatedFiles].size.positive?

|

logger.info(LOG_TAG) { " Writing updated #{file_path}..." } if patch_info[:updatedFiles].size.positive?

|

||||||

W3DHub::Mixer::Writer.new(file_path: "#{file_path}", package: target_mix.package, memory_buffer: true, encrypted: target_mix.encrypted?)

|

temp_mix_path = "#{temp_path}/#{File.basename(file_path)}"

|

||||||

|

temp_mix = W3DHub::WWMix.new(path: temp_mix_path)

|

||||||

|

target_mix.entries.each { |e| temp_mix.add_entry(entry: e) }

|

||||||

|

unless temp_mix.save

|

||||||

|

raise temp_mix.error_reason

|

||||||

|

end

|

||||||

|

|

||||||

|

# Overwrite target mix with temp mix

|

||||||

|

FileUtils.mv(temp_mix_path, file_path)

|

||||||

|

|

||||||

FileUtils.remove_dir(temp_path)

|

FileUtils.remove_dir(temp_path)

|

||||||

|

|

||||||

|

|||||||
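The patching hunk above no longer writes the merged archive in place: it saves to a path under `temp_path` and then moves that file over the target. A minimal sketch of the same write-then-move idea using plain files; `replace_via_temp` is a hypothetical helper, and the file names are placeholders:

```ruby
require "fileutils"
require "tmpdir"

# Hypothetical helper: write the new content to a scratch location first, then
# move it over the target so a failed write never truncates the original.
def replace_via_temp(target_path, temp_dir)
  temp_path = File.join(temp_dir, File.basename(target_path))
  File.open(temp_path, "wb") { |f| yield f }
  FileUtils.mv(temp_path, target_path) # overwrites the existing target
  target_path
end

Dir.mktmpdir do |dir|
  target = File.join(dir, "always.dat")
  scratch = File.join(dir, "scratch")
  FileUtils.mkdir_p(scratch)
  File.binwrite(target, "old")
  replace_via_temp(target, scratch) { |f| f.write("new") }
  puts File.binread(target) # new
end
```

If the block raises while writing, the target still holds its previous bytes. Note that `FileUtils.mv` is only a cheap rename when both paths are on the same filesystem; otherwise it falls back to copy and delete.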

121

lib/cache.rb


@@ -50,54 +50,16 @@ class W3DHub

|

|||||||

end

|

end

|

||||||

|

|

||||||

# Download a W3D Hub package

|

# Download a W3D Hub package

|

||||||

# TODO: More work needed to make this work reliably

|

def self.async_fetch_package(package, block)

|

||||||

def self._async_fetch_package(package, block)

|

|

||||||

path = package_path(package.category, package.subcategory, package.name, package.version)

|

|

||||||

headers = Api::FORM_ENCODED_HEADERS

|

|

||||||

start_from_bytes = package.custom_partially_valid_at_bytes

|

|

||||||

|

|

||||||

logger.info(LOG_TAG) { " Start from bytes: #{start_from_bytes} of #{package.size}" }

|

|

||||||

|

|

||||||

create_directories(path)

|

|

||||||

|

|

||||||

file = File.open(path, start_from_bytes.positive? ? "r+b" : "wb")

|

|

||||||

|

|

||||||

if start_from_bytes.positive?

|

|

||||||

headers = Api::FORM_ENCODED_HEADERS + [["Range", "bytes=#{start_from_bytes}-"]]

|

|

||||||

file.pos = start_from_bytes

|

|

||||||

end

|

|

||||||

|

|

||||||

body = "data=#{JSON.dump({ category: package.category, subcategory: package.subcategory, name: package.name, version: package.version })}"

|

|

||||||

|

|

||||||

response = Api.post("/apis/launcher/1/get-package", headers, body)

|

|

||||||

|

|

||||||

total_bytes = package.size

|

|

||||||

remaining_bytes = total_bytes - start_from_bytes

|

|

||||||

|

|

||||||

response.each do |chunk|

|

|

||||||

file.write(chunk)

|

|

||||||

|

|

||||||

remaining_bytes -= chunk.size

|

|

||||||

|

|

||||||

block.call(chunk, remaining_bytes, total_bytes)

|

|

||||||

end

|

|

||||||

|

|

||||||

response.status == 200

|

|

||||||

ensure

|

|

||||||

file&.close

|

|

||||||

end

|

|

||||||

|

|

||||||

# Download a W3D Hub package

|

|

||||||

def self.fetch_package(package, block)

|

|

||||||

endpoint_download_url = package.download_url || "#{Api::W3DHUB_API_ENDPOINT}/apis/launcher/1/get-package"

|

endpoint_download_url = package.download_url || "#{Api::W3DHUB_API_ENDPOINT}/apis/launcher/1/get-package"

|

||||||

if package.download_url

|

if package.download_url

|

||||||

uri_path = package.download_url.split("/").last

|

uri_path = package.download_url.split("/").last

|

||||||

endpoint_download_url = package.download_url.sub(uri_path, URI.encode_uri_component(uri_path))

|

endpoint_download_url = package.download_url.sub(uri_path, URI.encode_uri_component(uri_path))

|

||||||

end

|

end

|

||||||

path = package_path(package.category, package.subcategory, package.name, package.version)

|

path = package_path(package.category, package.subcategory, package.name, package.version)

|

||||||

headers = { "Content-Type": "application/x-www-form-urlencoded", "User-Agent": Api::USER_AGENT }

|

headers = [["content-type", "application/x-www-form-urlencoded"], ["user-agent", Api::USER_AGENT]]

|

||||||

headers["Authorization"] = "Bearer #{Store.account.access_token}" if Store.account && !package.download_url

|

headers << ["authorization", "Bearer #{Store.account.access_token}"] if Store.account && !package.download_url

|

||||||

body = "data=#{JSON.dump({ category: package.category, subcategory: package.subcategory, name: package.name, version: package.version })}"

|

body = URI.encode_www_form("data": JSON.dump({ category: package.category, subcategory: package.subcategory, name: package.name, version: package.version }))

|

||||||

start_from_bytes = package.custom_partially_valid_at_bytes

|

start_from_bytes = package.custom_partially_valid_at_bytes

|

||||||

|

|

||||||

logger.info(LOG_TAG) { " Start from bytes: #{start_from_bytes} of #{package.size}" }

|

logger.info(LOG_TAG) { " Start from bytes: #{start_from_bytes} of #{package.size}" }

|

||||||

@@ -107,54 +69,63 @@ class W3DHub

|

|||||||

file = File.open(path, start_from_bytes.positive? ? "r+b" : "wb")

|

file = File.open(path, start_from_bytes.positive? ? "r+b" : "wb")

|

||||||

|

|

||||||

if start_from_bytes.positive?

|

if start_from_bytes.positive?

|

||||||

headers["Range"] = "bytes=#{start_from_bytes}-"

|

headers << ["range", "bytes=#{start_from_bytes}-"]

|

||||||

file.pos = start_from_bytes

|

file.pos = start_from_bytes

|

||||||

end

|

end

|

||||||

|

|

||||||

streamer = lambda do |chunk, remaining_bytes, total_bytes|

|

result = false

|

||||||

file.write(chunk)

|

Sync do

|

||||||

|

response = nil

|

||||||

|

|

||||||

block.call(chunk, remaining_bytes, total_bytes)

|

Async::HTTP::Internet.send(package.download_url ? :get : :post, endpoint_download_url, headers, body) do |r|

|

||||||

end

|

response = r

|

||||||

|

if r.success?

|

||||||

|

total_bytes = package.size

|

||||||

|

|

||||||

# Create a new connection due to some weirdness somewhere in Excon

|

r.each do |chunk|

|

||||||

response = Excon.send(

|

file.write(chunk)

|

||||||

package.download_url ? :get : :post,

|

|

||||||

endpoint_download_url,

|

|

||||||

tcp_nodelay: true,

|

|

||||||

headers: headers,

|

|

||||||

body: package.download_url ? "" : body,

|

|

||||||

chunk_size: 50_000,

|

|

||||||

response_block: streamer,

|

|

||||||

middlewares: Excon.defaults[:middlewares] + [Excon::Middleware::RedirectFollower]

|

|

||||||

)

|

|

||||||

|

|

||||||

if response.status == 200 || response.status == 206

|

block.call(chunk, total_bytes - file.pos, total_bytes)

|

||||||

return true

|

end

|

||||||

else

|

|

||||||

|

result = true

|

||||||

|

end

|

||||||

|

end

|

||||||

|

|

||||||

|

if response.status == 200 || response.status == 206

|

||||||

|

result = true

|

||||||

|

else

|

||||||

|

logger.debug(LOG_TAG) { " Failed to retrieve package: (#{package.category}:#{package.subcategory}:#{package.name}:#{package.version})" }

|

||||||

|

logger.debug(LOG_TAG) { " Download URL: #{endpoint_download_url}, response: #{response&.status || -1}" }

|

||||||

|

|

||||||

|

result = false

|

||||||

|

end

|

||||||

|

rescue Async::TimeoutError => e

|

||||||

|

logger.error(LOG_TAG) { " Connection to \"#{endpoint_download_url}\" timed out after: #{W3DHub::Api::API_TIMEOUT} seconds" }

|

||||||

|

logger.error(LOG_TAG) { e }

|

||||||

logger.debug(LOG_TAG) { " Failed to retrieve package: (#{package.category}:#{package.subcategory}:#{package.name}:#{package.version})" }

|

logger.debug(LOG_TAG) { " Failed to retrieve package: (#{package.category}:#{package.subcategory}:#{package.name}:#{package.version})" }

|

||||||

logger.debug(LOG_TAG) { " Download URL: #{endpoint_download_url}, response: #{response&.status || -1}" }

|

logger.debug(LOG_TAG) { " Download URL: #{endpoint_download_url}, response: #{response&.status || -1}" }

|

||||||

|

|

||||||

return false

|

result = false

|

||||||

|

rescue StandardError => e

|

||||||

|

logger.error(LOG_TAG) { " Connection to \"#{endpoint_download_url}\" errored:" }

|

||||||

|

logger.error(LOG_TAG) { e }

|

||||||

|

logger.debug(LOG_TAG) { " Failed to retrieve package: (#{package.category}:#{package.subcategory}:#{package.name}:#{package.version})" }

|

||||||

|

logger.debug(LOG_TAG) { " Download URL: #{endpoint_download_url}, response: #{response&.status || -1}" }

|

||||||

|

|

||||||

|

result = false

|

||||||

end

|

end

|

||||||

rescue Excon::Error::Timeout => e

|

|

||||||

logger.error(LOG_TAG) { " Connection to \"#{endpoint_download_url}\" timed out after: #{W3DHub::Api::API_TIMEOUT} seconds" }

|

|

||||||

logger.error(LOG_TAG) { e }

|

|

||||||

logger.debug(LOG_TAG) { " Failed to retrieve package: (#{package.category}:#{package.subcategory}:#{package.name}:#{package.version})" }

|

|

||||||

logger.debug(LOG_TAG) { " Download URL: #{endpoint_download_url}, response: #{response&.status || -1}" }

|

|

||||||

|

|

||||||

return false

|

result

|

||||||

rescue Excon::Error => e

|

|

||||||

logger.error(LOG_TAG) { " Connection to \"#{endpoint_download_url}\" errored:" }

|

|

||||||

logger.error(LOG_TAG) { e }

|

|

||||||

logger.debug(LOG_TAG) { " Failed to retrieve package: (#{package.category}:#{package.subcategory}:#{package.name}:#{package.version})" }

|

|

||||||

logger.debug(LOG_TAG) { " Download URL: #{endpoint_download_url}, response: #{response&.status || -1}" }

|

|

||||||

|

|

||||||

return false

|

|

||||||

ensure

|

ensure

|

||||||

file&.close

|

file&.close

|

||||||

end

|

end

|

||||||

|

|

||||||

|

# Download a W3D Hub package

|

||||||

|

def self.fetch_package(package, block)

|

||||||

|

async_fetch_package(package, block)

|

||||||

|

end

|

||||||

|

|

||||||

def self.acquire_net_lock(key)

|

def self.acquire_net_lock(key)

|

||||||

Store["net_locks"] ||= {}

|

Store["net_locks"] ||= {}

|

||||||

|

|

||||||

|

|||||||
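Note on the new download path above: it streams the response body chunk by chunk into the file and reports progress to the caller's block as (chunk, remaining bytes, total bytes). The following is a minimal sketch of that pattern using only the standard library. `stream_to` is a hypothetical helper that does not appear in the diff, and an array of strings stands in for the HTTP response body, so treat it as an illustration rather than the launcher's actual code.

```ruby
require "stringio"

# Hypothetical helper (not part of the diff): writes each chunk to +io+
# and reports progress to the given block.
# +chunks+ stands in for the response body; anything responding to #each works.
def stream_to(io, chunks, total_bytes)
  chunks.each do |chunk|
    io.write(chunk)
    # Same argument order as the diff: chunk, remaining bytes, total bytes
    yield chunk, total_bytes - io.pos, total_bytes
  end

  # Report success only if every expected byte was written
  io.pos == total_bytes
end

io = StringIO.new
remaining = []
ok = stream_to(io, ["abc", "de"], 5) { |_chunk, left, _total| remaining << left }
```

Comparing the final write position against the expected size is an extra check added for this sketch; the code in the diff decides success from the response status instead.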

386

lib/mixer.rb


@@ -1,386 +0,0 @@

|

|||||||

require "digest"

|

|

||||||

require "stringio"

|

|

||||||

|

|

||||||

class W3DHub

|

|

||||||

|

|

||||||

# https://github.com/TheUnstoppable/MixLibrary used for reference

|

|

||||||

class Mixer

|

|

||||||

DEFAULT_BUFFER_SIZE = 32_000_000

|

|

||||||

MIX1_HEADER = 0x3158494D

|

|

||||||

MIX2_HEADER = 0x3258494D

|

|

||||||

|

|

||||||

class MixParserException < RuntimeError; end

|

|

||||||

class MixFormatException < RuntimeError; end

|

|

||||||

|

|

||||||

class MemoryBuffer

|

|

||||||

def initialize(file_path:, mode:, buffer_size:, encoding: Encoding::ASCII_8BIT)

|

|

||||||

@mode = mode

|

|

||||||

|

|

||||||

@file = File.open(file_path, mode == :read ? "rb" : "wb")

|

|

||||||

@file.pos = 0

|

|

||||||

@file_size = File.size(file_path)

|

|

||||||

|

|

||||||

@buffer_size = buffer_size

|

|

||||||

@chunk = 0

|

|

||||||

@last_chunk = 0

|

|

||||||

@max_chunks = @file_size / @buffer_size

|

|

||||||

@last_cached_chunk = nil

|

|

||||||

|

|

||||||

@encoding = encoding

|

|

||||||

|

|

||||||

@last_buffer_pos = 0

|

|

||||||

@buffer = @mode == :read ? StringIO.new(@file.read(@buffer_size)) : StringIO.new

|

|

||||||

@buffer.set_encoding(encoding)

|

|

||||||

|

|

||||||

# Cache frequently accessed chunks to reduce disk hits

|

|

||||||

@cache = {}

|

|

||||||

end

|

|

||||||

|

|

||||||

def pos

|

|

||||||

@chunk * @buffer_size + @buffer.pos

|

|

||||||

end

|

|

||||||

|

|

||||||

def pos=(offset)

|

|

||||||

last_chunk = @chunk

|

|

||||||

@chunk = offset / @buffer_size

|

|

||||||

|

|

||||||

raise "No backsies! #{offset} (#{@chunk}/#{last_chunk})" if @mode == :write && @chunk < last_chunk

|

|

||||||

|

|

||||||

fetch_chunk(@chunk) if @mode == :read

|

|

||||||

|

|

||||||

@buffer.pos = offset % @buffer_size

|

|

||||||

end

|

|

||||||

|

|

||||||

# string of bytes

|

|

||||||

def write(bytes)

|

|

||||||

length = bytes.length

|

|

||||||

|

|

||||||

# Crossing buffer boundary

|

|

||||||

if @buffer.pos + length > @buffer_size

|

|

||||||

|

|

||||||

edge_size = @buffer_size - @buffer.pos

|

|

||||||

buffer_edge = bytes[0...edge_size]

|

|

||||||

|

|

||||||

bytes_to_write = bytes.length - buffer_edge.length

|

|

||||||

chunks_to_write = (bytes_to_write / @buffer_size.to_f).ceil

|

|

||||||

bytes_written = buffer_edge.length

|

|

||||||

|

|

||||||

@buffer.write(buffer_edge)

|

|

||||||

flush_chunk

|

|

||||||

|

|

||||||

chunks_to_write.times do |i|

|

|

||||||

i += 1

|

|

||||||

|

|

||||||

@buffer.write(bytes[bytes_written...bytes_written + @buffer_size])

|

|

||||||

bytes_written += @buffer_size

|

|

||||||

|

|

||||||

flush_chunk if string.length == @buffer_size

|

|

||||||

end

|

|

||||||

else

|

|

||||||

@buffer.write(bytes)

|

|

||||||

end

|

|

||||||

|

|

||||||

bytes

|

|

||||||

end

|

|

||||||

|

|

||||||

def write_header(data_offset:, name_offset:)

|

|

||||||

flush_chunk

|

|

||||||

|

|

||||||

@file.pos = 4

|

|

||||||

write_i32(data_offset)

|

|

||||||

write_i32(name_offset)

|

|

||||||

|

|

||||||

@file.pos = 0

|

|

||||||

end

|

|

||||||

|

|

||||||

def write_i32(int)

|

|

||||||

@file.write([int].pack("l"))

|

|

||||||

end

|

|

||||||

|

|

||||||

def read(bytes = nil)

|

|

||||||

raise ArgumentError, "Cannot read whole file" if bytes.nil?

|

|

||||||

raise ArgumentError, "Cannot under read buffer" if bytes.negative?

|

|

||||||

|

|

||||||

# Long read, need to fetch next chunk while reading, mostly defeats this class...?

|

|

||||||

if @buffer.pos + bytes > buffered

|

|

||||||

buff = string[@buffer.pos..buffered]

|

|

||||||

|

|

||||||

bytes_to_read = bytes - buff.length

|

|

||||||

chunks_to_read = (bytes_to_read / @buffer_size.to_f).ceil

|

|

||||||

|

|

||||||

chunks_to_read.times do |i|

|

|

||||||

i += 1

|

|

||||||

|

|

||||||

fetch_chunk(@chunk + 1)

|

|

||||||

|

|

||||||

if i == chunks_to_read # read partial

|

|

||||||

already_read_bytes = (chunks_to_read - 1) * @buffer_size

|

|

||||||

bytes_more_to_read = bytes_to_read - already_read_bytes

|

|

||||||

|

|

||||||

buff << @buffer.read(bytes_more_to_read)

|

|

||||||

else

|

|

||||||

buff << @buffer.read

|

|

||||||

end

|

|

||||||

end

|

|

||||||

|

|

||||||

buff

|

|

||||||

else

|

|

||||||

fetch_chunk(@chunk) if @last_chunk != @chunk

|

|

||||||

|

|

||||||

@buffer.read(bytes)

|

|

||||||

end

|

|

||||||

end

|

|

||||||

|

|

||||||

def readbyte

|

|

||||||

fetch_chunk(@chunk + 1) if @buffer.pos + 1 > buffered

|

|

||||||

|

|

||||||

@buffer.readbyte

|

|

||||||

end

|

|

||||||

|

|

||||||

def fetch_chunk(chunk)

|

|

||||||

raise ArgumentError, "Cannot fetch chunk #{chunk}, only #{@max_chunks} exist!" if chunk > @max_chunks

|

|

||||||

@last_chunk = @chunk

|

|

||||||

@chunk = chunk

|

|

||||||

@last_buffer_pos = @buffer.pos

|

|

||||||

|

|

||||||

cached = @cache[chunk]

|

|

||||||

|

|

||||||

if cached

|

|

||||||

@buffer.string = cached

|

|

||||||

else

|

|

||||||

@file.pos = chunk * @buffer_size

|

|

||||||

buff = @buffer.string = @file.read(@buffer_size)

|

|

||||||

|

|

||||||

# Cache the active chunk (implementation bounces from @file_data_chunk and back to this for each 'file' processed)

|

|

||||||

if @chunk != @file_data_chunk && @chunk != @last_cached_chunk

|

|

||||||

@cache.delete(@last_cached_chunk) unless @last_cached_chunk == @file_data_chunk

|

|

||||||

@cache[@chunk] = buff

|

|

||||||

@last_cached_chunk = @chunk

|

|

||||||

end

|

|

||||||

|

|

||||||

buff

|

|

||||||

end

|

|

||||||

end

|

|

||||||

|

|

||||||

# This is accessed quite often, keep it around

|

|

||||||

def cache_file_data_chunk!

|

|

||||||

@file_data_chunk = @chunk

|

|

||||||

|

|

||||||

last_buffer_pos = @buffer.pos

|

|

||||||

@buffer.pos = 0

|

|

||||||

@cache[@chunk] = @buffer.read

|

|

||||||

@buffer.pos = last_buffer_pos

|

|

||||||

end

|

|

||||||

|

|

||||||

def flush_chunk

|

|

||||||

@last_chunk = @chunk

|

|

||||||

@chunk += 1

|

|

||||||

|

|

||||||

@file.pos = @last_chunk * @buffer_size

|

|

||||||

@file.write(string)

|

|

||||||

|

|

||||||

@buffer.string = "".force_encoding(@encoding)

|

|

||||||

end

|

|

||||||

|

|

||||||

def string

|

|

||||||

@buffer.string

|

|

||||||

end

|

|

||||||

|

|

||||||

def buffered

|

|

||||||

@buffer.string.length

|

|

||||||

end

|

|

||||||

|

|

||||||

def close

|

|

||||||

@file&.close

|

|

||||||

end

|

|

||||||

end

|

|

||||||

|

|

||||||

class Reader

|

|

||||||

attr_reader :package

|

|

||||||

|

|

||||||

def initialize(file_path:, ignore_crc_mismatches: false, metadata_only: false, buffer_size: DEFAULT_BUFFER_SIZE)

|

|

||||||

@package = Package.new

|

|

||||||

|

|

||||||

@buffer = MemoryBuffer.new(file_path: file_path, mode: :read, buffer_size: buffer_size)

|

|

||||||

|

|

||||||

@buffer.pos = 0

|

|

||||||

|

|

||||||

@encrypted = false

|

|

||||||

|

|

||||||

# Valid header

|

|

||||||

if (mime = read_i32) && (mime == MIX1_HEADER || mime == MIX2_HEADER)

|

|

||||||

@encrypted = mime == MIX2_HEADER

|

|

||||||

|

|

||||||

file_data_offset = read_i32

|

|

||||||

file_names_offset = read_i32

|

|

||||||

|

|

||||||

@buffer.pos = file_names_offset

|

|

||||||

file_count = read_i32

|

|

||||||

|

|

||||||

file_count.times do

|

|

||||||

@package.files << Package::File.new(name: read_string)

|

|

||||||

end

|

|

||||||

|

|

||||||

@buffer.pos = file_data_offset

|

|

||||||

@buffer.cache_file_data_chunk!

|

|

||||||

|

|

||||||

_file_count = read_i32

|

|

||||||

|

|

||||||

file_count.times do |i|

|

|

||||||

file = @package.files[i]

|

|

||||||

|

|

||||||

file.mix_crc = read_u32.to_s(16).rjust(8, "0")

|

|

||||||

file.content_offset = read_u32

|

|

||||||

file.content_length = read_u32

|

|

||||||

|

|

||||||

if !ignore_crc_mismatches && file.mix_crc != file.file_crc

|

|

||||||

raise MixParserException, "CRC mismatch for #{file.name}. #{file.mix_crc.inspect} != #{file.file_crc.inspect}"

|

|

||||||

end

|

|

||||||

|

|

||||||

pos = @buffer.pos

|

|

||||||

@buffer.pos = file.content_offset

|

|

||||||

file.data = @buffer.read(file.content_length) unless metadata_only

|

|

||||||

@buffer.pos = pos

|

|

||||||

end

|

|

||||||

else

|

|

||||||

raise MixParserException, "Invalid MIX file: Expected \"#{MIX1_HEADER}\" or \"#{MIX2_HEADER}\", got \"0x#{mime.to_s(16).upcase}\"\n(#{file_path})"

|

|

||||||

end

|

|

||||||

|

|

||||||

ensure

|

|

||||||

@buffer&.close

|

|

||||||

@buffer = nil # let GC collect

|

|

||||||

end

|

|

||||||

|

|

||||||

def read_i32

|

|

||||||

@buffer.read(4).unpack1("l")

|

|

||||||

end

|

|

||||||

|

|

||||||

def read_u32

|

|

||||||

@buffer.read(4).unpack1("L")

|

|

||||||

end

|

|

||||||

|

|

||||||

def read_string

|

|

||||||

buffer = ""

|

|

||||||

|

|

||||||

length = @buffer.readbyte

|

|

||||||

|

|

||||||

length.times do

|

|

||||||

buffer << @buffer.readbyte

|

|

||||||

end

|

|

||||||

|

|

||||||

buffer.strip

|

|

||||||

end

|

|

||||||

|

|

||||||

def encrypted?

|

|

||||||

@encrypted

|

|

||||||

end

|

|

||||||

end

|

|

||||||

|

|

||||||

class Writer

|

|

||||||

attr_reader :package

|

|

||||||

|

|

||||||

def initialize(file_path:, package:, memory_buffer: false, buffer_size: DEFAULT_BUFFER_SIZE, encrypted: false)

|

|

||||||

@package = package

|

|

||||||

|

|

||||||

@buffer = MemoryBuffer.new(file_path: file_path, mode: :write, buffer_size: buffer_size)

|

|

||||||

@buffer.pos = 0

|

|

||||||

|

|

||||||

@encrypted = encrypted

|

|

||||||

|

|

||||||

@buffer.write(encrypted? ? "MIX2" : "MIX1")

|

|

||||||

|

|

||||||

files = @package.files.sort { |a, b| a.file_crc <=> b.file_crc }

|

|

||||||

|

|

||||||

@buffer.pos = 16

|

|

||||||

|

|

||||||

files.each do |file|

|

|

||||||

file.content_offset = @buffer.pos

|

|

||||||

file.content_length = file.data.length

|

|

||||||

@buffer.write(file.data)

|

|

||||||

|

|

||||||

@buffer.pos += -@buffer.pos & 7

|

|

||||||

end

|

|

||||||

|

|

||||||

file_data_offset = @buffer.pos

|

|

||||||

write_i32(files.count)

|

|

||||||

|

|

||||||

files.each do |file|

|

|

||||||

write_u32(file.file_crc.to_i(16))

|

|

||||||

write_u32(file.content_offset)

|

|

||||||

write_u32(file.content_length)

|

|

||||||

end

|

|

||||||

|

|

||||||

file_name_offset = @buffer.pos

|

|

||||||

write_i32(files.count)

|

|

||||||

|

|

||||||

files.each do |file|

|

|

||||||

write_byte(file.name.length + 1)

|

|

||||||

@buffer.write("#{file.name}\0")

|

|

||||||

end

|

|

||||||

|

|

||||||

@buffer.write_header(data_offset: file_data_offset, name_offset: file_name_offset)

|

|

||||||

ensure

|

|

||||||

@buffer&.close

|

|

||||||

end

|

|

||||||

|

|

||||||

def write_i32(int)

|

|

||||||

@buffer.write([int].pack("l"))

|

|

||||||

end

|

|

||||||

|

|

||||||

def write_u32(uint)

|

|

||||||

@buffer.write([uint].pack("L"))

|

|

||||||

end

|

|

||||||

|

|

||||||

def write_byte(byte)

|

|

||||||

@buffer.write([byte].pack("c"))

|

|

||||||

end

|

|

||||||

|

|

||||||

def encrypted?

|

|

||||||

@encrypted

|

|

||||||

end

|

|

||||||

end

|

|

||||||

|

|

||||||

# Eager loads patch file and streams target file metadata (doesn't load target file data or generate CRCs)

|

|

||||||

# after target file metadata is loaded, create a temp file and merge patched files into list then

|

|

||||||

# build ordered file list and stream patched files and target file chunks into temp file,

|

|

||||||

# after that is done, replace target file with temp file

|

|

||||||

class Patcher

|

|

||||||

def initialize(patch_files:, target_file:, temp_file:, buffer_size: DEFAULT_BUFFER_SIZE)

|

|

||||||

@patch_files = patch_files.to_a.map { |f| Reader.new(file_path: f) }

|

|

||||||

@target_file = File.open(target_file)

|

|

||||||

@temp_file = File.open(temp_file, "a+b")

|

|

||||||

@buffer_size = buffer_size

|

|

||||||

end

|

|

||||||

end

|

|

||||||

|

|

||||||

class Package

|

|

||||||

attr_reader :files

|

|

||||||

|

|

||||||

def initialize(files: [])

|

|

||||||

@files = files

|

|

||||||

end

|

|

||||||

|

|

||||||

class File

|

|

||||||

attr_accessor :name, :mix_crc, :content_offset, :content_length, :data

|

|

||||||

|

|

||||||

def initialize(name:, mix_crc: nil, content_offset: nil, content_length: nil, data: nil)

|

|

||||||

@name = name

|

|

||||||

@mix_crc = mix_crc

|

|

||||||

@content_offset = content_offset

|

|

||||||

@content_length = content_length

|

|

||||||

@data = data

|

|

||||||

end

|

|

||||||

|

|

||||||

def file_crc

|

|

||||||

return "e6fe46b8" if @name.downcase == ".w3dhub.patch"

|

|

||||||

|

|

||||||

Digest::CRC32.hexdigest(@name.upcase)

|

|

||||||

end

|

|

||||||

|

|

||||||

def data_crc

|

|

||||||

Digest::CRC32.hexdigest(@data)

|

|

||||||

end

|

|

||||||

end

|

|

||||||

end

|

|

||||||

end

|

|

||||||

end

|

|

||||||
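Note on the removed Mixer code above: two details are easy to miss. The header constants are the ASCII tags "MIX1" and "MIX2" read as little-endian 32-bit integers, and `-pos & 7` is the number of padding bytes needed to reach the next 8-byte boundary. A small sketch of both, assuming nothing beyond core Ruby: `pad_to_8` is a hypothetical name, and the explicit little-endian directive `"l<"` is used here where the diff uses the native-endian `"l"`, which is assumed to give the same result on little-endian hosts.

```ruby
mix1_header = 0x3158494D
mix2_header = 0x3258494D

# The magic number is the ASCII tag read as a little-endian int32.
magic = "MIX1".unpack1("l<")

# Packing the MIX2 constant back yields the four tag bytes.
tag = [mix2_header].pack("l<")

# Hypothetical helper: bytes of padding needed to reach the next
# multiple of 8 (0 when the position is already aligned).
def pad_to_8(pos)
  -pos & 7
end

aligned = 17 + pad_to_8(17)
```

Writing the tag as an integer or as the literal string produces the same four bytes in the file, provided the integer is packed little-endian.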

280

lib/ww_mix.rb

Normal file


@@ -0,0 +1,280 @@

|

|||||||

|

require "digest"

|

||||||

|

require "stringio"

|

||||||

|

|

||||||

|

class W3DHub

|

||||||

|

# Reimplementing MIX1 reader/writer with years more

|

||||||

|

# experience working with these formats and having the

|

||||||

|

# advantage of being able to reference the renegade source

|

||||||

|

# code :)

|

||||||

|

class WWMix

|

||||||

|

MIX1_HEADER = 0x3158494D

|

||||||

|

MIX2_HEADER = 0x3258494D

|

||||||

|

|

||||||

|

MixHeader = Struct.new(

|

||||||

|

:mime_type, # int32

|

||||||

|

:file_data_offset, # int32

|

||||||

|

:file_names_offset, # int32

|

||||||

|

:_reserved # int32

|

||||||

|

)

|

||||||

|

|

||||||

|

EntryInfoHeader = Struct.new(

|

||||||

|

:crc32, # uint32

|

||||||

|

:content_offset, # uint32

|

||||||

|

:content_length # uint32

|

||||||

|

)

|

||||||

|

|

||||||

|

class Entry

|

||||||

|

attr_accessor :path, :name, :info, :blob, :is_blob

|

||||||

|

|

||||||

|

def initialize(name:, path:, info:, blob: nil)

|

||||||

|

@name = name

|

||||||

|

@path = path

|

||||||

|

@info = info

|

||||||

|

@blob = blob

|

||||||

|

|

||||||

|

@info.content_length = blob.size if blob?

|

||||||

|

end

|

||||||

|

|

||||||

|

def blob?

|

||||||

|

@blob

|

||||||

|

end

|

||||||

|

|

||||||

|

def calculate_crc32

|

||||||

|

Digest::CRC32.hexdigest(@name.upcase).upcase.to_i(16)

|

||||||

|

end

|

||||||

|

|

||||||

|

# Write entry's data to stream.

|

||||||

|

# Caller is responsible for ensuring stream is valid for writing

|

||||||

|

def copy_to(stream)

|

||||||

|

if blob?

|

||||||

|

return false if @blob.size.zero?

|

||||||

|

|

||||||

|

stream.write(blob)

|

||||||

|

return true

|

||||||

|

else

|

||||||

|

if read

|

||||||

|

stream.write(@blob)

|

||||||

|

@blob = nil

|

||||||

|

return true

|

||||||

|

end

|

||||||

|

end

|

||||||

|

|

||||||

|

false

|

||||||

|

end

|

||||||

|

|

||||||

|

def read

|

||||||

|

return false unless File.exist?(@path)

|

||||||

|

return false if File.directory?(@path)

|

||||||

|

return false if File.size(@path) < @info.content_offset + @info.content_length

|

||||||

|

|

||||||

|

@blob = File.binread(@path, @info.content_length, @info.content_offset)

|

||||||

|

|

||||||

|

true

|

||||||

|

end

|

||||||

|

end

|

||||||

|

|

||||||

|

attr_reader :path, :encrypted, :entries, :error_reason

|

||||||

|

|

||||||

|

def initialize(path:, encrypted: false)

|

||||||

|

@path = path

|

||||||

|

@encrypted = encrypted

|

||||||

|

@entries = []

|

||||||

|

|

||||||

|

@error_reason = ""

|

||||||

|

end

|

||||||

|

|

||||||

|

# Load entries from MIX file. Entry data is NOT loaded.

|

||||||

|

# @return true on success or false on failure. Check error_reason for why.

|

||||||

|

def load

|

||||||

|

unless File.exist?(@path)

|

||||||

|

@error_reason = format("Path does not exist: %s", @path)

|

||||||

|

return false

|

||||||

|

end

|

||||||

|

|

||||||

|

if File.directory?(@path)

|

||||||

|

@error_reason = format("Path is a directory: %s", @path)

|

||||||

|

return false

|

||||||

|

end

|

||||||

|

|

||||||

|

File.open(@path, "rb") do |f|

|

||||||

|

header = MixHeader.new(0, 0, 0, 0)

|

||||||

|

header.mime_type = read_i32(f)

|

||||||

|

header.file_data_offset = read_i32(f)

|

||||||

|

header.file_names_offset = read_i32(f)

|

||||||

|

header._reserved = read_i32(f)

|

||||||

|

|

||||||

|

unless header.mime_type == MIX1_HEADER || header.mime_type == MIX2_HEADER

|

||||||

|

@error_reason = format("Invalid mime type: %d", header.mime_type)

|

||||||

|

return false

|

||||||

|

end

|

||||||

|

|

||||||

|

@encrypted = header.mime_type == MIX2_HEADER

|

||||||

|

|

||||||

|

# Read entry info

|

||||||

|

f.pos = header.file_data_offset

|

||||||

|

file_count = read_i32(f)

|

||||||

|

|

||||||

|

file_count.times do |i|

|

||||||

|

entry_info = EntryInfoHeader.new(0, 0, 0)

|

||||||

|

entry_info.crc32 = read_u32(f)

|

||||||

|

entry_info.content_offset = read_u32(f)

|

||||||

|

entry_info.content_length = read_u32(f)

|

||||||

|

|

||||||

|

@entries << Entry.new(name: "", path: @path, info: entry_info)

|

||||||

|

end

|

||||||

|

|

||||||

|

# Read entry names

|

||||||

|

f.pos = header.file_names_offset

|

||||||

|

file_count = read_i32(f)

|

||||||

|

|

||||||

|

file_count.times do |i|

|

||||||

|

@entries[i].name = read_string(f)

|

||||||

|

end

|

||||||

|

end

|

||||||

|

|

||||||

|

true

|

||||||

|

end

|

||||||

|

|

||||||

|

def save

|

||||||

|

unless @entries.size.positive?

|

||||||

|

@error_reason = "No entries to write."

|

||||||

|

return false

|

||||||

|

end

|

||||||

|

|

||||||

|

if File.directory?(@path)

|

||||||

|

@error_reason = format("Path is a directory: %s", @path)

|

||||||

|

return false

|

||||||

|

end

|

||||||

|

|

||||||

|

File.open(@path, "wb") do |f|

|

||||||

|

header = MixHeader.new(encrypted? ? MIX2_HEADER : MIX1_HEADER, 0, 0, 0)

|

||||||

|

|

||||||

|

# write mime type

|

||||||

|

write_i32(f, header.mime_type)

|

||||||

|

|

||||||

|

f.pos = 16

|

||||||

|

|

||||||

|

# sort entries by crc32 of their name

|

||||||

|

sort_entries

|

||||||

|

|

||||||

|

# write file blobs

|

||||||

|

@entries.each do |entry|

|

||||||

|

# store current io position

|

||||||

|

pos = f.pos